Context

Keeping up with IT news is getting harder, and AI has made it worse. There is too much to follow, too many sources, and too many updates landing at the same time.

I wanted one place to get the daily flow of DevOps, AI, and security updates without jumping between ten sites and trying to remember what I had already seen. RSS is still the simplest way to merge all of that into one stream. The problem is that most RSS automation workflows pull everything without checking whether an article was already processed. They push links to a channel and call it a day, so the next run starts repeating itself. First it is a few duplicate links and a pile of posts you were never going to read. Then it turns into spam, and you end up with a feed you no longer want to use.

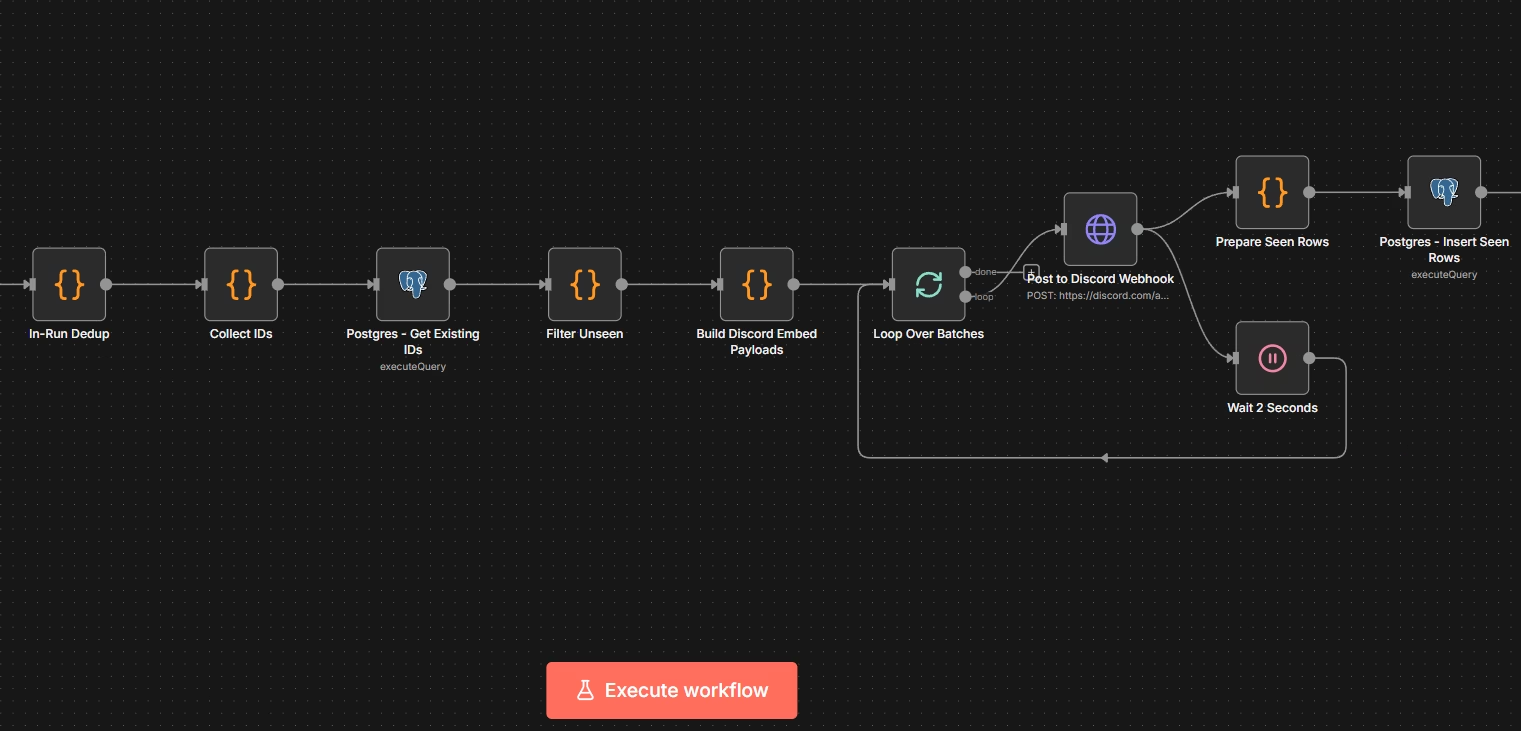

That is why I built this workflow in n8n. It parses selected RSS feeds, filters for relevant DevOps, AI, and security topics, and records each matching article in a database so the workflow keeps state between runs. n8n was the obvious choice because it is fast to build in, easy to follow as a visual workflow, and simple to adjust when the source list or filtering logic needs to change. The main goal was to keep the daily digest clean over time. A nice side effect is that rerunning the workflow only sends new matching articles instead of reposting the same links.

Overview

This workflow collects daily news from selected DevOps, AI, and security sources and posts one digest to Discord.

For DevOps and AI, it pulls from sources such as AWS, CNCF, Kubernetes, InfoQ, The New Stack, Cloudflare, and Ars Technica. For security, it includes The Hacker News and Bleeping Computer. I picked those because they are the sites I already read, and the source list is meant to be adjusted to your own needs.
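Before anything can be filtered or deduplicated, each feed has to become a flat list of items with a stable ID. The post does not say how the workflow derives `item_id`, so the sketch below assumes a hypothetical scheme: hash the RSS `<guid>`, falling back to the link when there is no guid. It handles RSS 2.0 only (no Atom), and the function names are illustrative.

```python
import hashlib
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime
from typing import Optional


def make_item_id(guid: Optional[str], link: str) -> str:
    """Hypothetical ID scheme: SHA-256 of the <guid>, or of the link if the guid is empty."""
    key = (guid or "").strip() or link.strip()
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def parse_pub_date(raw: Optional[str]) -> Optional[str]:
    """RSS 2.0 dates are RFC 822 style; return ISO 8601, or None if missing or unparseable."""
    if not raw:
        return None
    try:
        return parsedate_to_datetime(raw.strip()).isoformat()
    except (TypeError, ValueError):
        return None


def parse_rss(xml_text: str, source: str) -> list[dict]:
    """Flatten an RSS 2.0 document into item dicts. Atom feeds are not handled here."""
    root = ET.fromstring(xml_text)
    items = []
    for node in root.iter("item"):
        link = (node.findtext("link") or "").strip()
        if not link:
            continue  # nothing useful to post without a link
        items.append({
            "item_id": make_item_id(node.findtext("guid"), link),
            "title": (node.findtext("title") or "").strip(),
            "link": link,
            "source": source,
            "pub_date": parse_pub_date(node.findtext("pubDate")),
        })
    return items
```

Hashing keeps the primary key a fixed length, and preferring the guid means a feed that rewrites tracking parameters on its links can still map to the same ID.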

The main point of the workflow is not RSS parsing. Anyone can glue feeds together in a few minutes. The useful part is producing one digest that stays relevant over time instead of slowly degrading into repeated links and low-value spam.

Why stateful

Without memory, a daily digest becomes repetitive very quickly.

This workflow keeps track of processed article IDs between runs and uses that state to skip links that were already sent earlier. That keeps the digest clean and stops it from reposting the same feed items again and again.
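The check-then-record loop can be sketched in a few functions. SQLite stands in for PostgreSQL here so the sketch runs without a server, and `get_existing_ids`, `insert_seen`, and `new_items_only` are hypothetical names that loosely mirror the "Postgres - Get Existing IDs" and "Postgres - Insert Seen Rows" nodes; the real workflow's queries may differ.

```python
import sqlite3

# SQLite stands in for PostgreSQL so this runs anywhere. Columns mirror rss_seen_items;
# CURRENT_TIMESTAMP replaces Postgres now(), and pub_date is stored as ISO 8601 text.
SCHEMA = """
CREATE TABLE IF NOT EXISTS rss_seen_items (
    item_id       TEXT PRIMARY KEY,
    link          TEXT,
    source        TEXT,
    pub_date      TEXT,
    first_seen_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
)
"""


def get_existing_ids(conn: sqlite3.Connection, ids: list[str]) -> set[str]:
    """Return which of `ids` were already recorded on an earlier run."""
    if not ids:
        return set()
    placeholders = ",".join("?" for _ in ids)
    rows = conn.execute(
        f"SELECT item_id FROM rss_seen_items WHERE item_id IN ({placeholders})", ids
    )
    return {row[0] for row in rows}


def insert_seen(conn: sqlite3.Connection, items: list[dict]) -> None:
    """Record items as seen. ON CONFLICT ... DO NOTHING (valid in PostgreSQL and
    SQLite 3.24+) means the insert never fails on a duplicate key."""
    conn.executemany(
        "INSERT INTO rss_seen_items (item_id, link, source, pub_date) "
        "VALUES (?, ?, ?, ?) ON CONFLICT (item_id) DO NOTHING",
        [(i["item_id"], i["link"], i["source"], i.get("pub_date")) for i in items],
    )
    conn.commit()


def new_items_only(conn: sqlite3.Connection, items: list[dict]) -> list[dict]:
    """Drop items seen on earlier runs or repeated within this run, then record the rest."""
    existing = get_existing_ids(conn, [i["item_id"] for i in items])
    fresh, seen_now = [], set()
    for item in items:
        if item["item_id"] in existing or item["item_id"] in seen_now:
            continue
        seen_now.add(item["item_id"])
        fresh.append(item)
    insert_seen(conn, fresh)
    return fresh
```

This sketch records items as soon as they pass the check. Recording them only after a successful Discord post would avoid silently losing articles when the webhook call fails, at the cost of a slightly longer flow.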

That is the whole reason for making it stateful. A scheduled workflow that forgets everything after each run becomes noisy very fast.

Filtering

The workflow filters for topics related to DevOps, AI, and security.

That includes areas such as Kubernetes, containers, Terraform, observability, CI/CD, cloud platforms, LLMs, RAG, MLOps, vulnerabilities, CVEs, IAM, malware, ransomware, and supply chain security. The goal is to keep the digest focused on things that are actually useful for platform, cloud, AI, and security work.

There is also a small exclusion list to cut obvious junk.
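An include/exclude keyword filter of this kind can be sketched with two regular expressions. The include terms are an illustrative subset of the topics listed above, and the exclusion terms are hypothetical because the post does not list them; the workflow's actual matching logic may differ.

```python
import re

# Include terms come from the topic list above; this is an illustrative subset,
# not the workflow's actual keyword configuration.
INCLUDE = [
    "kubernetes", "container", "terraform", "observability", "ci/cd", "cloud",
    "llm", "rag", "mlops", "vulnerability", "vulnerabilities", "cve", "iam",
    "malware", "ransomware", "supply chain",
]
# Hypothetical exclusion terms; the real exclusion list is not given in the post.
EXCLUDE = ["sponsored", "webinar", "podcast"]


def word_pattern(words: list[str]) -> re.Pattern:
    """Case-insensitive whole-word match with an optional plural 's',
    so 'rag' matches 'RAG' but not 'storage'."""
    alternation = "|".join(re.escape(w) for w in words)
    return re.compile(rf"\b(?:{alternation})s?\b", re.IGNORECASE)


INCLUDE_RE = word_pattern(INCLUDE)
EXCLUDE_RE = word_pattern(EXCLUDE)


def is_relevant(title: str, summary: str = "") -> bool:
    """Keep an article if it hits at least one include term and no exclude term."""
    text = f"{title} {summary}"
    return bool(INCLUDE_RE.search(text)) and not EXCLUDE_RE.search(text)
```

Word boundaries matter for short terms like `rag`, `iam`, and `cve`; plain substring matching would let them fire inside unrelated words.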

Prerequisites

To use it, you need an n8n instance, PostgreSQL as the n8n backend, a small table for storing processed feed item IDs, a Discord webhook, and your own source list and filters.

PostgreSQL handles state tracking, which means reusing the backend database that many n8n deployments already have in place.

The workflow file is available below as a direct download. Import it into n8n (Create Workflow > Import Workflow from File...), review the source list and filters, and update any environment-specific settings before using it. The limit of 50 items is only there so the first run does not flood Discord with posts.

Setup

Before importing the workflow, create a table for processed feed item IDs.

Then in n8n, create a PostgreSQL credential with access to that table, and create a Discord webhook for the target channel.

Then import the workflow, assign the PostgreSQL credential to the database nodes "Postgres - Get Existing IDs" and "Postgres - Insert Seen Rows", review the source list and filters, and replace the placeholder webhook URL with the real one in the "Post to Discord Webhook" node.
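For reference, a Discord webhook accepts a JSON `POST` whose `content` field holds the message text, capped at 2000 characters. The sketch below shows that call with the standard library only; the message format, the `User-Agent` value, and the function names are assumptions for illustration, not what the "Post to Discord Webhook" node actually sends.

```python
import json
import urllib.request

DISCORD_CONTENT_LIMIT = 2000  # Discord rejects message content longer than this


def build_payload(articles: list[dict]) -> dict:
    """One entry per article; wrapping the URL in <...> stops Discord from unfurling
    a preview for every link. This format is illustrative, not the workflow's template."""
    lines = [f"**{a['title']}** ({a['source']})\n<{a['link']}>" for a in articles]
    content = "\n".join(lines)
    if len(content) > DISCORD_CONTENT_LIMIT:
        # Simplification: truncate instead of splitting across messages.
        content = content[: DISCORD_CONTENT_LIMIT - 3] + "..."
    return {"content": content}


def post_to_discord(webhook_url: str, payload: dict) -> int:
    """POST the JSON payload to a Discord webhook; a plain webhook call answers 204."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: send an explicit User-Agent, since Discord has been reported
            # to reject urllib's default one.
            "User-Agent": "rss-digest-sketch/0.1",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Usage would look like `post_to_discord(url, build_payload(batch))`, with `url` taken from the webhook you created for the target channel.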

Table creation SQL:

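```sql
-- One row per feed item the workflow has already processed
CREATE TABLE IF NOT EXISTS rss_seen_items (
    item_id       text PRIMARY KEY,                    -- dedup key checked on every run
    link          text,
    source        text,
    pub_date      timestamptz,
    first_seen_at timestamptz NOT NULL DEFAULT now()   -- when the item was first recorded
);
```

Result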

The workflow groups articles into batches of 10 and sends one Discord message per batch. When there are more than 10 articles, it waits 2 seconds between batches. Without that delay, Discord can deliver the second batch before the first one, and the digest arrives out of order.
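The batching and pacing can be sketched as a small loop: cap the items, split them into groups of 10, and pause 2 seconds between messages. `send_in_batches` and `chunks` are hypothetical names; `send` is whatever posts one message (for example a webhook call), and `sleep` is injectable so the timing can be tested without waiting. Applying the cap of 50 right before posting is an assumption, since the post does not say where the limit sits in the flow.

```python
import time
from typing import Callable, Iterator, Sequence, TypeVar

T = TypeVar("T")


def chunks(items: Sequence[T], size: int) -> Iterator[list[T]]:
    """Yield consecutive slices of at most `size` items, preserving order."""
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


def send_in_batches(
    items: Sequence[T],
    send: Callable[[list[T]], None],
    batch_size: int = 10,
    delay_s: float = 2.0,
    max_items: int = 50,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Send up to `max_items` items in batches, pausing between batches.
    Returns the number of messages sent."""
    capped = list(items)[:max_items]  # plays the role of the 50-item limit
    sent = 0
    for batch in chunks(capped, batch_size):
        if sent:
            sleep(delay_s)  # no pause before the first message, only between messages
        send(batch)  # blocking call: the next batch starts only after this returns
        sent += 1
    return sent
```

With 23 new articles this sends three messages (10, 10, 3) with two pauses in between; with the default cap, a first run never sends more than five messages.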